Quantum Learning

My close associates know that I’m always eager to learn new things. My general tendency is to revisit philosophy, history, and religion, but times are changing, and new developments are evolving so rapidly that I need to look forward: I need to understand the world of artificial intelligence. This rabbit hole has taken me into tunnels I haven’t encountered before. It has made me dangerous. I don’t know what I’m writing about. Inconstant podcast listeners have heard me say that I don’t know what I’m thinking until I read what I have written, so today I’m jotting down some scattered information I’ve learned so far about artificial intelligence, quantum computing, and other fascinating areas as I dive deeper into this labyrinth of unexplored topics. What I write here may be totally wrong. But I’m writing about it anyway as I learn. I’m calling it by a new term I just invented—quantum learning. What I have written below was done in an afternoon; that’s why I call it quantum learning. Unfortunately, like the quantum computer, my brain may have overheated, and all the stuff below is entirely wrong. That’s OK. I’ll sort it out later.

The bit is the most basic unit of information in computing and digital communication. The name is a portmanteau of binary digit. In linguistics, a blend (also known as a blend word, lexical blend, or portmanteau) is a word formed by combining the meanings and parts of the sounds of two or more words. English examples include smog, coined by blending smoke and fog, and motel, from motor (motorist) and hotel. Or bit, from binary and digit.

The bit represents a logical state with one of two possible values. These values are most commonly represented as either “1” or “0”, but other representations such as true/false, yes/no, on/off, or +/− are also widely used. A binary digit, represented as either 0 or 1, is used to convey information in classical computers. When averaged over its two states (0,1), a binary digit can signify up to one bit of information content, with a bit being the fundamental unit of information.
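
To check my own understanding of "up to one bit of information content," here is a minimal sketch that computes the Shannon entropy of a binary digit. The function name `binary_entropy` and the probabilities I try are my own choices for illustration; the formula is the standard one, but treat the code as a study aid rather than anything authoritative.

```python
import math


def binary_entropy(p_one: float) -> float:
    """Shannon entropy, in bits, of a binary digit that is 1 with probability p_one."""
    if not 0.0 <= p_one <= 1.0:
        raise ValueError("p_one must be between 0 and 1")
    entropy = 0.0
    for p in (p_one, 1.0 - p_one):
        if p > 0.0:  # a state that never occurs contributes nothing
            entropy -= p * math.log2(p)
    return entropy


if __name__ == "__main__":
    # A fair digit (0 and 1 equally likely) carries the most information.
    for p in (0.5, 0.9, 1.0):
        print(f"P(1) = {p:.1f} -> {binary_entropy(p):.3f} bits")
```

A digit that is equally likely to be 0 or 1 carries exactly one bit. The more predictable the digit, the less it tells you, down to zero bits when the value is certain.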

In classical computer technologies, a processed bit is represented by one of two levels of low direct current voltage. When switching from one of these levels to the other, a so-called “forbidden zone” between the two logic levels must be crossed as quickly as possible. The inability to cross the forbidden zone fast enough limits classical computer technologies.

Most models of quantum computation require the use of qubits. While the state of a bit can only be binary (either 0 or 1), the general state of a qubit according to quantum mechanics can be an arbitrary coherent superposition of both states, 0 and 1, at the same time.
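
As far as I can tell, a single qubit's state can be written as two complex numbers (amplitudes), one for 0 and one for 1, and the squared magnitude of each gives the chance of reading that value. The sketch below is my own illustration of that idea; the helper names `normalize` and `measurement_probabilities` are made up for this post.

```python
import math


def normalize(alpha: complex, beta: complex) -> tuple[complex, complex]:
    """Scale the two amplitudes so that |alpha|^2 + |beta|^2 equals 1."""
    norm = math.sqrt(abs(alpha) ** 2 + abs(beta) ** 2)
    if norm == 0:
        raise ValueError("at least one amplitude must be non-zero")
    return alpha / norm, beta / norm


def measurement_probabilities(alpha: complex, beta: complex) -> tuple[float, float]:
    """Probability of reading 0 and of reading 1 (the Born rule)."""
    return abs(alpha) ** 2, abs(beta) ** 2


if __name__ == "__main__":
    # An equal superposition of 0 and 1.
    alpha, beta = normalize(1, 1)
    p0, p1 = measurement_probabilities(alpha, beta)
    print(f"P(0) = {p0:.2f}, P(1) = {p1:.2f}")
```

A classical bit would correspond to the two special cases where one amplitude is 1 and the other is 0.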

Quantum superposition is a fundamental principle of quantum mechanics that states that linear combinations of solutions to the Schrödinger equation are also solutions of the Schrödinger equation. This is because the Schrödinger equation is a linear differential equation in time and position. I will not discuss the Schrödinger equation, because I don’t understand the math.

In quantum computers, a qubit is the analog of the classical information bit, and qubits can be superposed. Unlike classical bits, a superposition of qubits represents information about two states in parallel. Controlling the superposition of qubits is a central challenge in quantum computation. Qubit systems, such as nuclear spins with a small coupling strength, are robust against external disturbances; however, the same small coupling complicates the readout of results.

While measuring a classical bit does not disturb its state, measuring a qubit destroys its coherence and irrevocably disturbs the superposition state. One bit can be fully encoded in one qubit. However, a qubit can carry even more: with superdense coding, sending a single qubit conveys up to two bits, provided the sender and receiver already share an entangled pair of qubits.
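
Superdense coding was the part I found hardest to believe, so I tried simulating it with plain Python lists. This is a sketch under my own assumptions: the names "Alice" and "Bob" are the usual textbook labels, the function names are invented for this post, and the state is just four amplitudes in the order 00, 01, 10, 11. The trick only works because the two parties share an entangled pair before any message is sent.

```python
import math

S = 1 / math.sqrt(2)


def bell_pair():
    """Shared entangled state (|00> + |11>) / sqrt(2).

    Amplitudes are listed in the order 00, 01, 10, 11, where the first
    digit is Alice's qubit and the second digit is Bob's qubit.
    """
    return [S, 0.0, 0.0, S]


def x_on_alice(s):
    """Bit flip on Alice's qubit: swaps the 0x and 1x amplitudes."""
    return [s[2], s[3], s[0], s[1]]


def z_on_alice(s):
    """Phase flip on Alice's qubit: negates the 1x amplitudes."""
    return [s[0], s[1], -s[2], -s[3]]


def cnot_alice_controls_bob(s):
    """Flip Bob's qubit when Alice's qubit is 1 (swaps 10 and 11)."""
    return [s[0], s[1], s[3], s[2]]


def hadamard_on_alice(s):
    """Hadamard gate on Alice's qubit."""
    return [S * (s[0] + s[2]), S * (s[1] + s[3]),
            S * (s[0] - s[2]), S * (s[1] - s[3])]


def send_two_bits(z, x):
    """Encode bits (z, x) on Alice's single qubit and decode them on Bob's side."""
    state = bell_pair()
    if x:
        state = x_on_alice(state)
    if z:
        state = z_on_alice(state)
    # Alice now sends her one qubit to Bob, who holds both qubits.
    state = hadamard_on_alice(cnot_alice_controls_bob(state))
    # After decoding, one outcome has probability ~1, so read it directly.
    probabilities = [abs(a) ** 2 for a in state]
    outcome = probabilities.index(max(probabilities))
    return outcome >> 1, outcome & 1


if __name__ == "__main__":
    for z in (0, 1):
        for x in (0, 1):
            print((z, x), "->", send_two_bits(z, x))
```

Each of the four two-bit messages comes back unchanged, even though Alice only ever touches and sends one qubit.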

The DiVincenzo criteria are conditions essential for constructing a quantum computer, proposed in 1996 by theoretical physicist David P. DiVincenzo. The quantum computer itself was first proposed by mathematician Yuri Manin in 1980 and physicist Richard Feynman in 1982 as a way to efficiently simulate quantum systems. The DiVincenzo criteria comprise seven conditions that an experimental setup must meet to effectively implement quantum algorithms: five concern quantum computation itself, and two concern quantum communication. All of this involves mathematical computations that I don’t understand.

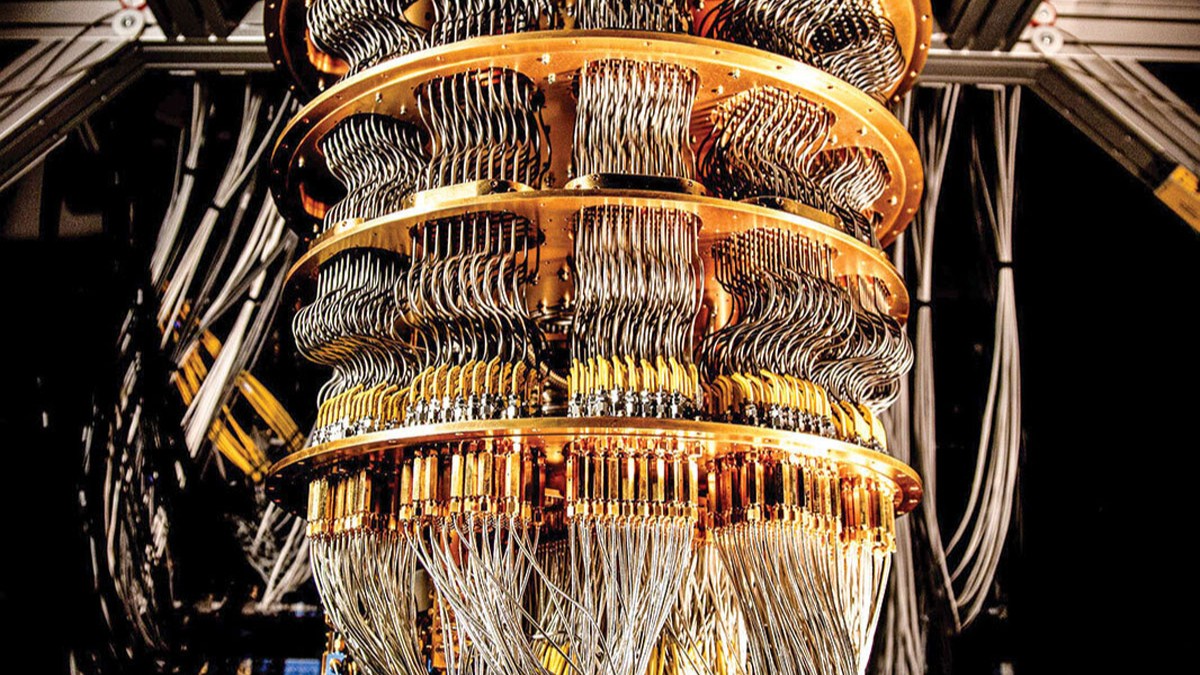

Numerous proposals have emerged for constructing a quantum computer, each facing varying levels of success with the different challenges involved in building quantum devices. Some of these proposals utilize superconducting qubits, trapped ions, liquid and solid-state nuclear magnetic resonance, or optical cluster states. While they show promise, they also encounter issues that hinder their practical implementation.

Nuclear magnetic resonance quantum computing (NMRQC) is one of several proposed approaches to building a quantum computer; it uses the spin states of nuclei within molecules as qubits. The quantum states are probed via nuclear magnetic resonance, enabling the system to be implemented as a variation of nuclear magnetic resonance spectroscopy. NMR is distinct from other quantum computer implementations because it uses an ensemble of systems, in this case molecules, rather than a single pure state.

I intended to elaborate on the quantum computer, which would require me to study much more material on the topic, but I got sidetracked by a rabbit hole about the bit.

A digital device or another physical system can store a bit. These may include the two stable states of a flip-flop, the two positions of an electrical switch, two distinct voltage or current levels allowed by a circuit, two different levels of light intensity, two directions of magnetization or polarization, the orientation of reversible double-stranded DNA, and so forth.

The earliest example of a binary storage device was the punched card, invented by Basile Bouchon and Jean-Baptiste Falcon in 1732, and subsequently adopted by early computer manufacturers, such as IBM. A variant of that idea was perforated paper tape. In all these systems, the card or tape carried an array of hole positions; each position could be either punched through or not, thus representing one bit of information.
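
To picture "punched through or not" as bits, I wrote a toy sketch that turns each character into a row of eight hole positions. The layout is hypothetical (one ASCII byte per row, "O" for a hole, "." for no hole) and is not meant to match any historical card or tape format.

```python
def punch_row(byte: int) -> str:
    """Render one byte as 8 hole positions: 'O' = punched (1), '.' = blank (0)."""
    if not 0 <= byte <= 255:
        raise ValueError("a row holds exactly one byte (0..255)")
    return "".join("O" if (byte >> bit) & 1 else "." for bit in range(7, -1, -1))


def read_row(row: str) -> int:
    """Read a row back into a number, most significant position first."""
    value = 0
    for position in row:
        value = (value << 1) | (1 if position == "O" else 0)
    return value


def punch(text: str) -> list[str]:
    """Punch one row per ASCII character."""
    return [punch_row(b) for b in text.encode("ascii")]


if __name__ == "__main__":
    rows = punch("IBM")
    for row in rows:
        print(row)
    # Reading the holes back recovers the original text.
    print(bytes(read_row(r) for r in rows).decode("ascii"))
```

The point is only that a hole position has two possible states, so a row of eight positions can hold any one of 256 values.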

The encoding of text using bits was also seen in Morse code and early digital communication machines such as teletypes and stock ticker machines. The first electrical devices for discrete logic were elevator and traffic light control circuits, telephone switches, and electrical relay switches that could be either “open” or “closed.” These relays functioned as mechanical switches, physically toggling between states to represent binary data and forming the fundamental building blocks of early computing and control systems.
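
Morse code is not quite binary the way a punched hole is, because the pauses matter too. One common way to see it as bits is to treat the signal as on (1) or off (0) per time unit: a dot is one unit on, a dash is three units on, with one unit off between symbols, three between letters, and seven between words. The sketch below follows that timing convention; the function names are my own, and I only included the letters A to Z.

```python
MORSE = {
    "A": ".-", "B": "-...", "C": "-.-.", "D": "-..", "E": ".", "F": "..-.",
    "G": "--.", "H": "....", "I": "..", "J": ".---", "K": "-.-", "L": ".-..",
    "M": "--", "N": "-.", "O": "---", "P": ".--.", "Q": "--.-", "R": ".-.",
    "S": "...", "T": "-", "U": "..-", "V": "...-", "W": ".--", "X": "-..-",
    "Y": "-.--", "Z": "--..",
}


def to_morse(text: str) -> str:
    """Letters separated by spaces, words separated by ' / '."""
    words = text.upper().split()
    return " / ".join(" ".join(MORSE[ch] for ch in word) for word in words)


def to_bits(text: str) -> str:
    """On/off signal per time unit: 1 = key down, 0 = key up."""
    encoded_words = []
    for word in text.upper().split():
        letters = []
        for ch in word:
            symbols = ["1" if s == "." else "111" for s in MORSE[ch]]
            letters.append("0".join(symbols))      # 1 unit between symbols
        encoded_words.append("000".join(letters))  # 3 units between letters
    return "0000000".join(encoded_words)           # 7 units between words


if __name__ == "__main__":
    print(to_morse("SOS"))
    print(to_bits("SOS"))
```

Characters outside A to Z raise a `KeyError` in this sketch, which is good enough for my note-taking purposes.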

When relays were replaced by vacuum tubes in the 1940s, computer builders experimented with various storage methods, such as pressure pulses traveling down a mercury delay line, charges stored on the inner surface of a cathode-ray tube, or opaque spots printed on glass discs using photolithographic techniques.

In the 1950s and 1960s, these methods were largely replaced by magnetic storage devices, including magnetic-core memory, magnetic tapes, drums, and disks, where a bit was represented by the polarity of magnetization in a specific area of a ferromagnetic film or by a change in polarity from one direction to another.

The same principle was later applied in the magnetic bubble memory developed in the 1980s and continues to be found in various magnetic stripe items such as metro tickets and some credit cards. In modern semiconductor memory, such as dynamic random-access memory or solid-state drives, the two values of a bit are represented by two levels of electric charge stored in a capacitor or a floating-gate transistor. In certain types of programmable logic arrays, a bit may be represented by the presence or absence of a conducting path at specific points in a circuit.

The barcode was invented by Norman Joseph Woodland and Bernard Silver and patented in the US in 1952. The invention was based on Morse code, which was extended to thin and thick bars. However, it took over twenty years for this invention to become commercially successful. A barcode is a method of representing data in a visual, machine-readable format. Originally, barcodes encoded information by varying the widths, spacings, and sizes of parallel lines. These barcodes, commonly referred to as linear or one-dimensional (1D), can be scanned by specialized optical scanners called barcode readers, which come in several types.

Later, two-dimensional variants were developed using rectangles, dots, hexagons, and other patterns, known as 2D barcodes or matrix codes, although they do not actually use bars. Both 1D and 2D codes can be read by purpose-built 2D optical scanners, which come in several different forms. Matrix codes can also be scanned by a digital camera connected to a microcomputer running software that captures a photographic image of the barcode and analyzes it to decode the information. A mobile device with a built-in camera, like a smartphone, can serve as the latter type of barcode reader using specialized application software and is suitable for both 1D and 2D codes.

A QR code (quick-response code) is a type of two-dimensional matrix barcode invented in 1994 by the Japanese company Denso Wave for labeling automobile parts. It consists of black squares on a white background with fiducial markers, which can be read by imaging devices like cameras.

The initial alternating square design developed by the research team led by Masahiro Hara was inspired by the black and white stones played on a Go board*; the arrangement of the position detection markers was established by identifying the least-used sequence of alternating black and white areas on printed materials.

[*The Go board, known as the goban in Japanese, is the surface used for placing stones. The standard board features a 19×19 grid. To play the game of Go, you need the board, stones (the playing pieces), and bowls for the stones.]

The necessary data from the black and white sequence is extracted from patterns present in both the horizontal and vertical components of the QR image. In optical discs, a bit is encoded as the presence or absence of a microscopic pit on a reflective surface. In one-dimensional barcodes and two-dimensional QR codes, bits are represented by lines or squares that can be either black or white.

As of 2024, QR codes are used in a much broader context, including on mobile phones. QR codes can display text, open a webpage, add a vCard contact to the user’s device, open a Uniform Resource Identifier (URI), connect to a wireless network, or compose an email or text message.
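
From what I can gather, the QR code itself only stores text; the phone decides what to do based on how that text starts. The sketch below builds two such payload strings. It does not draw a QR image. The `WIFI:` layout is a widely used convention rather than part of the QR standard, so scanner support is an assumption on my part, and the function names and sample values are invented for illustration.

```python
from urllib.parse import quote


def _escape_wifi(value: str) -> str:
    """Backslash-escape characters that act as separators in the WIFI: convention."""
    escaped = []
    for ch in value:
        if ch in '\\;,:"':
            escaped.append("\\")
        escaped.append(ch)
    return "".join(escaped)


def wifi_payload(ssid: str, password: str, auth: str = "WPA") -> str:
    """Text a scanner may interpret as 'join this wireless network'."""
    return f"WIFI:T:{auth};S:{_escape_wifi(ssid)};P:{_escape_wifi(password)};;"


def mailto_payload(address: str, subject: str, body: str) -> str:
    """A mailto URI that opens a pre-filled email draft."""
    return f"mailto:{address}?subject={quote(subject)}&body={quote(body)}"


if __name__ == "__main__":
    print(wifi_payload("Cafe;Guest", "p:ss"))
    print(mailto_payload("a@example.com", "Hi there", "See you"))
```

Either string could then be handed to whatever QR generator you like; the generator just turns the characters into black and white squares.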

An optical disc is a disc-shaped object that stores information in the form of physical variations on its surface, which can be read using a beam of light. Optical discs can be reflective, with the light source and detector on the same side of the disc, or transmissive, allowing light to pass through the disc to be detected on the other side. Optical discs can store analog information (LaserDisc), digital information (DVD), or both on the same disc (CD Video). Their primary uses include the distribution of media and data.

This is all I learned in three hours of study. That’s why I call it quantum learning: fast reading that produces a lot of gobbledygook. When I study what I wrote, maybe I will make a little more sense of the material. I apologize for the length and the plagiarism, but I didn’t have time to shorten it and write it into a coherent article. Anyway, I have it down so I can study what I wrote and make some sense out of it.